Questioning the Conventional Wisdom on Liability and Open Source Software

To improve cybersecurity, open source software should not be completely exempt from software liability.

A key and occasionally fiery thread of the debate around software liability has been the role of open source software. Most modern software applications are, under the hood, more than 80 percent open source software: software whose source code is free to inspect, modify, and distribute and that is often maintained by volunteers. What are the potential liability-related obligations of companies and open source software developers with respect to the open source software in a software product? This question has become even more salient because of the recent XZ Utils backdoor, in which a popular open source software project was compromised by a malicious project maintainer.

There are at least three beliefs embedded in this debate that have become the majority opinion, none without merit but all worthy of scrutiny. First, open source software developers should bear no legal responsibility for the software they create, modify, and distribute. Second, some analysts, when discussing a liability regime and open source software, have advanced the idea that liability should focus primarily on preventing companies from shipping products with open source components that are known to have vulnerabilities or obvious code flaws, especially vulnerabilities that are known to have been exploited. Third, if and when software liability becomes law and covers open source software included in a product, then companies will finally invest substantially in the open source software ecosystem.

This piece interrogates each claim, drawing in part on our experience as software developers and in part on our background in empirical computer security research, and offers three counterclaims.

Counterclaim #1: Malicious open source software developers should potentially bear legal responsibility for malicious behavior.

It’s a bedrock principle that open source software is provided “as is.” In fact, some, perhaps most, open source software advocates view open source code as an expression of free speech. In this view, open source software maintainers should not be held responsible for flaws or problems (or anything, really) related to their code. These views are bolstered by a widespread belief that liability for open source software developers would hinder innovation and economic growth. For instance, during the debate over the European Union’s Cyber Resilience Act, and specifically over the act’s lack of a clause exempting open source software developers from liability, one commentator wrote:

Holding open source developers whose components end up in commercial products liable for security issues will stifle innovation and harm the EU economy without providing a substantive improvement in security.

Most moderate voices on open source software liability argue that “final goods assemblers” should be the party responsible for the security of open source. That’s legalese for software companies. The idea is that the companies that presumably profit from the use of the open source software within their products should be liable, including for security bugs in the open source software included in their products.

These are rational views. Numerous critical, security-sensitive open source projects are maintained by volunteers, and placing liability on these maintainers could potentially discourage further contribution and weaken an already-stretched open source ecosystem.

But there is an edge case: Should open source software developers who knowingly distribute malicious open source software also be exempt from liability? This isn’t an academic question. The recent XZ backdoor, which came to light between the first draft of this article and its publication, shows that malicious open source software is a real threat. By conservative estimates, hundreds, if not thousands or even tens of thousands, of malicious open source software packages have been distributed via popular open source package repositories, the open source software equivalent of Apple’s App Store. Nor is the threat new. For instance, in 2018, open source software users discovered that one open source project (event-stream), with over 2 million downloads per week, was harvesting account details from Bitcoin wallets. Although this attack affected only a small set of Bitcoin users, the episode shows how future attacks could compromise the privacy of millions of users in a wider context.

An absolutist view that open source software is provided “as is” implies that open source software developers can distribute even malicious open source software. To our knowledge, there has never been a legal case against an entity or individual linked to these malicious packages. Purists presumably believe that “as is” clauses offer protection, or that any harm caused can be addressed by existing laws such as the Computer Fraud and Abuse Act. Skeptics might counter that this legal boundary, assuming attribution is possible in such cases, needs to be tested.

Counterclaim #2: Liability related to open source software should potentially include avoiding end-of-life software.

Jim Dempsey expertly summarizes an emerging position that there should be a software liability “floor” that explicitly defines software “dos” and “don’ts.” Dempsey argues that some practices, like hardcoded passwords, are so obviously negligent that they could help define a standard of care. His analysis stresses the usefulness of a standard that includes avoiding vulnerabilities known to have been exploited (such as those on the Cybersecurity and Infrastructure Security Agency’s known exploited vulnerabilities catalog) or weaknesses associated with the so-called OWASP Top 10, a consensus-driven list of the most critical security risks for web applications. This focus on avoiding known vulnerabilities and insecure coding practices is welcome, but it raises the question of which practices should count as “insecure,” a question that has received surprisingly little systematic research.

Should such an effort to define a floor gain steam, there is one practice, sometimes overlooked, that is increasingly getting attention: including end-of-life (EOL) open source software components in an application. EOL open source software components are no longer supported by either their open source maintainers or the organization associated with the component. The problem is that these components no longer receive security updates: when a vulnerability is discovered and announced, there is no easy way to patch it. Importantly, EOL open source software components are not a theoretical danger. One analysis of the top 10 open source packages across eight programming language ecosystems found two examples of EOL components (“abandonware,” in the analysis’s terminology).

Fortunately, there is a nascent movement, embodied in open source projects, startups, and fledgling government initiatives, to identify, prevent, and replace EOL open source software components. It’s therefore worth considering whether including EOL open source software in software applications should be a software “don’t.” This will naturally raise questions about what exactly counts as EOL open source software: It can be hard to determine whether a project is EOL, poorly maintained, or simply stable as a rock. But those questions are no more vexing than many others related to open source software security.
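To make the definitional problem concrete, here is a minimal Python sketch of how a tool might triage dependencies. It assumes hypothetical metadata fields (`last_release`, `archived`, `declared_eol`) and an arbitrary two-year staleness threshold (`STALE_AFTER_DAYS`); none of these come from any particular EOL-detection project. The design choice worth noting is that staleness alone yields “review” rather than “eol,” since a quiet project may be abandoned or simply stable.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical threshold: no release in this many days triggers human review.
STALE_AFTER_DAYS = 730


@dataclass
class Dependency:
    name: str
    last_release: date                   # date of the most recent published release
    archived: bool = False               # e.g., repository marked read-only by its owners
    declared_eol: Optional[date] = None  # EOL date announced by maintainers, if any


def classify(dep: Dependency, today: date) -> str:
    """Return 'eol', 'review', or 'ok' for a single dependency (illustrative heuristic)."""
    # An explicit end-of-life declaration from the maintainers is the strongest signal.
    if dep.declared_eol is not None and dep.declared_eol <= today:
        return "eol"
    # An archived repository will not accept fixes, so treat it as EOL too.
    if dep.archived:
        return "eol"
    # Staleness alone is ambiguous (abandoned or simply stable), so flag for review.
    if (today - dep.last_release).days > STALE_AFTER_DAYS:
        return "review"
    return "ok"


if __name__ == "__main__":
    today = date(2024, 6, 1)
    deps = [
        Dependency("old-lib", date(2019, 1, 1), declared_eol=date(2020, 1, 1)),
        Dependency("quiet-lib", date(2020, 5, 1)),
        Dependency("active-lib", date(2024, 3, 1)),
    ]
    for d in deps:
        print(d.name, classify(d, today))
    # prints: old-lib eol / quiet-lib review / active-lib ok
```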

Counterclaim #3: Software liability laws will not necessarily lead to broad corporate investment in the open source software ecosystem.

Most discourse implicitly assumes that, should companies face liability for the security flaws in the open source code they use, they will devote their financial and engineering resources to improving the security and health of those open source projects. This in turn assumes that companies will keep using the same open source software projects. Past analysis (including by one of the co-authors) makes this case. Some authors, for instance, argue:

[Clarifying liability] ... would encourage further growth in the role played by bigger, well-resourced software vendors in improving the security of commonly used open source packages.

It’s plausible. But there are other possible outcomes. For example, companies could reduce their use of open source software, depend on fewer packages, and write more of their own code. This would be a big change, but software liability is, relative to the status quo, a radical idea. Radical consequences seem at least plausible.

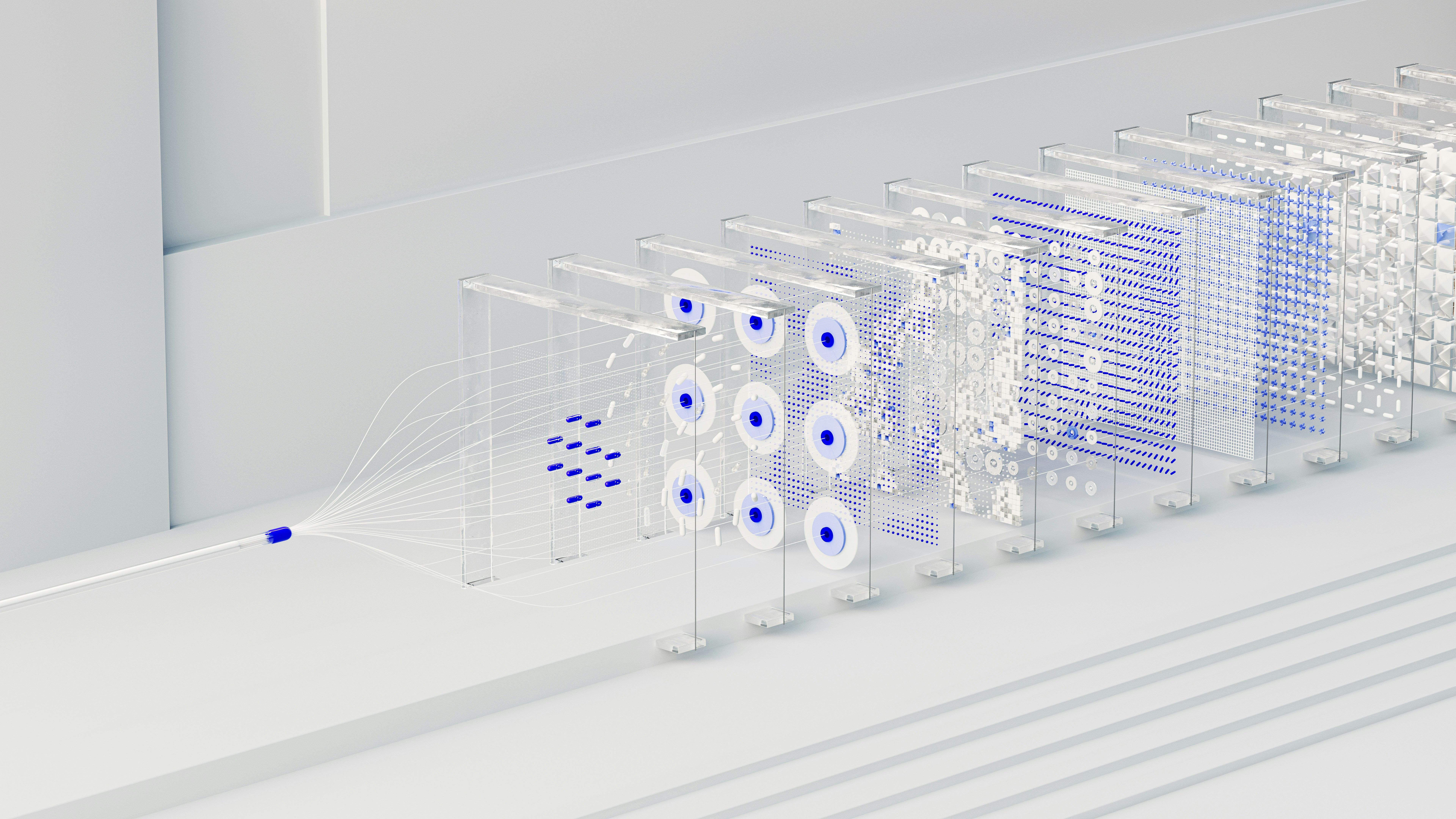

Another, likelier outcome is the rise of walled open source gardens. Instead of downloading code from unvetted GitHub code repositories and free-for-all nonprofit package registries (actions that most software developers, including ourselves, do all the time), software developers would be forced by company policy, itself a result of liability laws, to use only open source software on a preapproved list. This list could be maintained internally, or it could be a list of dependencies that are vetted by a trusted third party.
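In practice, a walled garden reduces to a policy check on what developers are allowed to pull in. The Python sketch below assumes a hypothetical preapproved list (`APPROVED`) that maps package names to vetted versions, and checks pinned `name==version` requirement lines against it, using PEP 503-style name normalization so that `NumPy` and `numpy` compare equal. The parser is deliberately simplified (it ignores extras and environment markers), and `check_requirements` is an illustrative name, not part of any real tool.

```python
import re

# Hypothetical preapproved list: package name -> set of vetted versions.
APPROVED = {
    "requests": {"2.31.0", "2.32.3"},
    "numpy": {"1.26.4"},
}


def normalize(name: str) -> str:
    # PEP 503-style normalization: collapse runs of -, _, . and lowercase.
    return re.sub(r"[-_.]+", "-", name).lower()


def check_requirements(lines: list[str], approved: dict[str, set[str]]) -> list[str]:
    """Return policy violations for simple 'name==version' requirement lines."""
    allowed = {normalize(k): v for k, v in approved.items()}
    violations = []
    for raw in lines:
        line = raw.split("#", 1)[0].strip()  # drop trailing comments and whitespace
        if not line:
            continue
        if "==" not in line:
            # Unpinned ranges could silently pull in an unvetted release.
            violations.append(f"{line}: not pinned to an exact version")
            continue
        name, version = (part.strip() for part in line.split("==", 1))
        key = normalize(name)
        if key not in allowed:
            violations.append(f"{name}: not on the approved list")
        elif version not in allowed[key]:
            violations.append(f"{name}=={version}: version not vetted")
    return violations


if __name__ == "__main__":
    reqs = ["requests==2.32.3", "NumPy==1.26.4", "leftpad==1.0.0", "flask>=3.0"]
    for problem in check_requirements(reqs, APPROVED):
        print(problem)
    # prints: "leftpad: not on the approved list"
    # then:   "flask>=3.0: not pinned to an exact version"
```

A check like this would most plausibly run in continuous integration, so that an unapproved dependency fails the build rather than reaching a shipped product.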

This sort of risk transfer through walled open source gardens arguably already exists, though it isn’t necessarily widespread. For example, Anaconda, a Python development environment for machine learning and data science, does this for a select group of widely used Python packages. Other companies do this for key Linux distribution packages too, but there is much room for expanding these walled gardens and making the “walls” higher. That is a tall order: There are now hundreds of thousands of widely used open source packages across many programming language ecosystems, and state-of-the-art techniques for detecting open source package malware and for proactively detecting open source vulnerabilities leave much to be desired. On the bright side, Linux distributions like Wolfi are trying to be an open source walled garden of the future.

* * *

Should software liability ever become likely, legislators will need to grapple with the role of open source software within such a regime, because modern software applications are, by some estimates, over 80 percent open source software. Part of this process will require analysts to confront assumptions that have mostly been implicit, such as the claim that placing liability on software companies as “final goods assemblers” will lead to broad investments across the current open source ecosystem. If these assumptions prove less solid than hoped, advocates of software liability may be surprised by unforeseen consequences. And anyone who has developed software knows software is nothing if not full of unforeseen consequences.