What the Defense Production Act Can and Can’t Do to Anthropic

The legal answer depends on what the government is actually demanding—and the statute's ambiguities cut both ways.

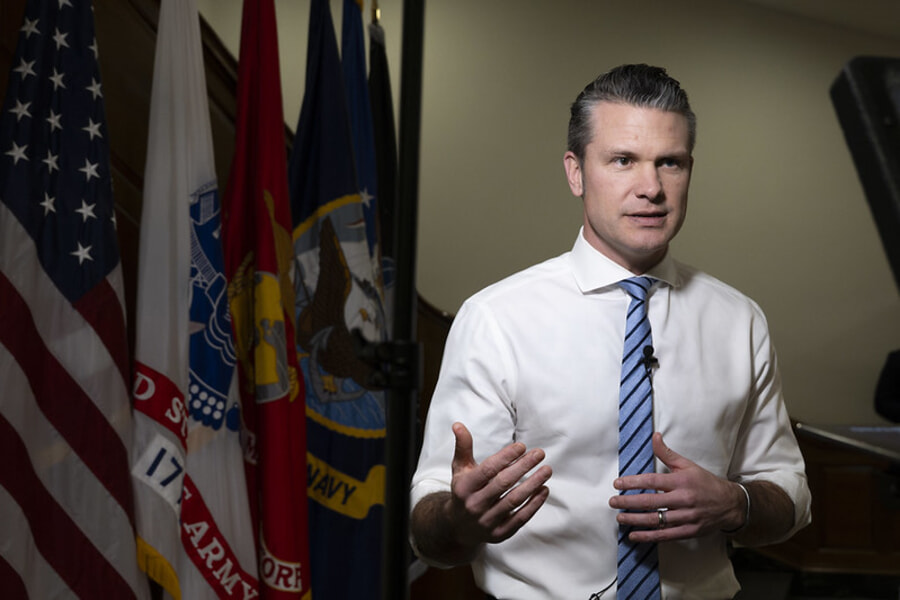

On Tuesday, Feb. 24, Defense Secretary Pete Hegseth met with Anthropic CEO Dario Amodei and threatened to invoke the Defense Production Act (DPA) if Anthropic doesn't agree to the Pentagon’s terms by Friday. The DPA, Hegseth warned, would let the government compel Anthropic to provide its technology on the Pentagon's terms. Anthropic is resisting allowing its artificial intelligence (AI) to be used for autonomous weapons or mass surveillance—two red lines that the company has maintained since entering the defense market.

I argued last week that Congress—not the Pentagon or Anthropic—should set the rules for military AI. The DPA threat makes that case stronger. But first, it's worth understanding what the DPA can actually do here, because the answer depends entirely on what the government is demanding. The legal analysis is genuinely complicated: Different demands raise very different legal questions, and a statute whose core compulsion powers were designed for steel mills and tank factories maps awkwardly onto a dispute about AI safety guardrails.

The DPA and AI

The DPA is a Korean War-era statute that gives the president broad authority to direct private industry in the name of national defense. It has been extended many times since its enactment, most recently through September 2026.

The DPA already applies to AI. The Biden administration's since-rescinded Executive Order 14110, Section 4.2, invoked the DPA to require AI companies to report on training activities, red-team results, and model weights. But President Biden used Title VII, which contains the DPA's information-gathering authority. Based on the available reporting, Hegseth is likely threatening Title I—the statute's core compulsion power. That's an enormous escalation.

Biden's precedent cuts both ways for Anthropic. It makes it harder for the company to argue the DPA doesn't reach AI at all. But establishing that AI falls within the statute's scope doesn't mean every demand is lawful. The range of possible demands under Title I is enormous, and the legal analysis is different for each.

The statute, 50 U.S.C. § 4511(a), outlines the DPA's main compulsion authority, and it does two functionally different things. The first is queue-jumping: The president can "require that performance under contracts or orders ... which he deems necessary or appropriate to promote the national defense shall take priority over performance under any other contract or order." Applied to AI, this means the government jumps to the front of Anthropic's application programming interface queue—priority access to the same product everyone else gets, just first. This is the DPA's workhorse, used regularly for defense procurement, including software and IT services. This authority is well established and not at issue in the current dispute.

Section 4511(a)'s other authority, compelled contracting and allocation, goes much further. The DPA authorizes the president to "require acceptance and performance" of contracts by anyone he finds capable, and to "allocate materials, services, and facilities in such manner, upon such conditions, and to such extent as he shall deem necessary or appropriate to promote the national defense." Together, the compelled-contracting and allocation powers potentially let the government force a company to accept new work and control the distribution of goods and services. During the Korean War, the government used the allocation power to ration aluminum, copper, and other critical materials between military and civilian production. During the coronavirus pandemic, both the Trump and Biden administrations used these authorities to redirect medical supplies, vaccines, and test kits to domestic production.

But these broader authorities are largely untested. As James Baker, former chief judge on the U.S. Court of Appeals for the Armed Forces and now a law professor at Syracuse University, has documented, the allocation power has barely been used since the Korean War, even though the broad language is "the sort ... executive branch lawyers sneak into legislation when no one is watching."

Two Demands, Two Legal Questions

The legal analysis depends on what the government actually demands, and two possibilities stand out. Anthropic's contract with the Pentagon includes usage-policy restrictions—contractual guardrails that prohibit applications such as autonomous weapons and mass surveillance. The Pentagon originally agreed to these terms. But in January, Hegseth's AI strategy memorandum directed that all Defense Department AI contracts incorporate standard "any lawful use" language within 180 days—a direct collision with Anthropic's restrictions. The government might now demand that Claude, Anthropic’s frontier AI model, be provided without those contractual guardrails, while leaving the model itself untouched. Or it might go further and demand that Anthropic retrain Claude to strip the safety restrictions out of the model entirely.

Two legal questions determine the strength of the government's position. The first is statutory: Does the DPA authorize the government to compel a company to provide a product it doesn't currently make, or only to redirect existing products on new terms? Baker notes that government agencies including the Federal Emergency Management Agency and the Department of Homeland Security have taken the broad view—companies can be forced to accept contracts for products that they don't ordinarily make.

But, as Baker notes, the text doesn't go that far. "If indeed acceptance of contracts for products a company does not ordinarily supply is intended to be required by the DPA," he writes, "it ought to be clearly stated in the law." It isn't. The major questions doctrine, used recently by the Supreme Court to strike down the core of the Trump administration's emergency tariffs, cuts the same way: Courts are skeptical when agencies claim vast authority from ambiguous statutory text.

To be sure, the DPA is an explicit economic-direction statute—Congress clearly authorized some kinds of compulsion. But the further a demand strays from priority access to existing products, the harder it becomes to defend under that doctrine. And the implementing regulation, 15 C.F.R. § 700.13(c), partially supports the narrower reading: by default (unless the Commerce Department directs otherwise), companies may reject rated orders for "an item not supplied or for a service not performed."

Whatever the answer to the first question, there is also a second one: For each specific demand, does the government ask for an existing product or a new one? If a demand just changes the terms of sale for an existing product, the government can draw on the allocation authority's sweeping language about conditions. If it amounts to demanding a new product, the government runs into the ambiguity Baker identified.

Claude Without Contractual Restrictions

The demand most likely at issue is that the government wants Claude without Anthropic's contractual usage-policy guardrails. Here the characterization question is genuinely contested, and each side's statutory argument flows from how it characterizes the demand.

The government would likely argue that dropping the contractual restrictions doesn't change the product. Claude is the same model with the same weights and the same capabilities; the government just wants different contractual terms. The government has two paths here. Under the priorities authority, it could argue that Claude-without-guardrails is the same product on different terms, although the implementing regulations do permit companies to reject rated orders when the buyer is "unwilling or unable to meet regularly established terms of sale or payment," unless the Commerce Department directs otherwise. Alternatively, the government could invoke the allocations authority, whose sweeping language lets the president allocate services "in such manner, upon such conditions, and to such extent as he shall deem necessary or appropriate."

Anthropic would likely argue the opposite: that its usage restrictions are part of what Claude is as a commercial service, and that Claude-without-guardrails is a product it doesn't offer to anyone. On this view, the government is asking for a new product, and the statute doesn't clearly authorize that.

As Charlie Bullock, who has previously analyzed how the DPA applies to AI systems, observed, neither side's argument is "a slam dunk." But the sheer breadth of the DPA's allocation language arguably tilts in the government's direction.

Forced Retraining

The more extreme possibility would be the government compelling Anthropic to retrain Claude, stripping the safety guardrails baked into the model's training rather than merely modifying the access terms. Here the characterization question seems easier: A retrained model looks much more like a new product than a model offered without contractual restrictions does. Admittedly, the government has a textual argument in its favor: The DPA's definition of "services" includes "development … of a critical technology item," and the government could frame retraining Claude as exactly that. Whether courts would accept that framing, especially in light of the major questions doctrine, is another matter.

The closest analogy is the FBI's attempts in 2015 and 2016 to compel Apple to write custom software to unlock iPhones. Those cases arose under the All Writs Act, not the DPA, and the distinction matters: The All Writs Act is a gap-filling procedural statute, and courts were skeptical precisely because it provided no substantive authority to compel production. The DPA is an express grant of compulsion power, which means the government's legal footing would be stronger here than the FBI's was. Still, the analogy is instructive: Even with a substantive statute, courts may balk at stretching compulsion authority to force a company to build something fundamentally at odds with its own product. In one of the Apple cases, a magistrate judge in the Eastern District of New York denied the government's request, concluding that the government was asking the court to "give it authority that Congress chose not to confer."

Forced retraining would also raise novel First Amendment questions. If model training decisions are editorial choices, then forcing Anthropic to strip its model's guardrails on autonomous weapons and mass surveillance compels the company to express values it rejects. This question is genuinely unsettled. Some have argued that AI companies exercise First Amendment rights when they train their models and determine their outputs; others have argued to the contrary. The Supreme Court in Moody v. NetChoice (2024) recognized that some algorithmic curation constitutes protected expression, but several justices expressed skepticism about how far that principle extends.

* * *

If Anthropic does resist a formal DPA order, the most likely path, as Bullock noted, would be to comply under protest (given that the DPA provides for criminal penalties for noncompliance) and immediately seek a temporary restraining order, which courts can sometimes grant within days.

But Hegseth doesn't need to win in court—he just needs the threat to be credible enough to change behavior. All this legal uncertainty may itself be the point. As Baker notes, "Sometimes the availability of potential authority is sufficient leverage to achieve a result through consultation without invoking the law." Other leading AI companies reportedly have already agreed to the Pentagon's terms for unclassified work (and Elon Musk’s xAI for classified work), leaving Anthropic isolated.

The ironies of the government's positions are worth noting. Even Biden's far more modest DPA use—Title VII reporting requirements—drew sharp Republican criticism. Yet Hegseth now threatens Title I compulsion—orders of magnitude more coercive than the reporting requirements that Republicans called "overreach." And of course, it is difficult to square Hegseth's characterization of Anthropic as a "supply chain risk" with the premise of a DPA order: that Anthropic's technology is so essential to national defense that the government must compel access to it.

But the deeper problem continues to be that this fight is happening because Congress hasn't set substantive rules for military AI. If Congress had legislated guidelines on autonomous weapons and surveillance, Anthropic would likely be far more comfortable selling its systems to the military, and the DPA threat would never have arisen. The question of what values to embed in military AI is too important to be resolved by a Cold War-era production statute.