AI Verification: Infrastructure for Prosperity, Governance, and Peace

New verification tools could make AI governance credible without requiring states or firms to expose their secrets.


Whether you’re checking that a child has brushed their teeth or that your nuclear-armed foe is abiding by an arms control agreement, much of civilization revolves around our ability to determine whether others are following rules. While governments needed to build many tools to control nuclear arms, parents needed nothing similar for monitoring tooth-brushing. Why? Primarily because it’s (usually) fairly obvious whether a child is or was brushing their teeth (no matter how hard they try to fool their parents). But unlike with children, information about the behavior of a nuclear-armed state might be incomplete, sensitive, and deliberately obfuscated.

Unfortunately, verifying the governance of artificial intelligence (AI) appears to be on the more difficult end of this spectrum. Policymakers and users alike might want AI governance for myriad reasons, but the scale of the industry, the complexity of AI technologies, and geopolitics all increase the difficulty of creating serious AI agreements and then verifying that they are being followed. Building on the findings of a report on international AI verification, I contend that verifying behavior in the domain of artificial intelligence is possible, but it is not trivial. The problem of AI verification generally looks less like overseeing a child’s dental care and more like arms control.

Before we get into how AI verification might be done, it’s worth highlighting some of the reasons why we might want this capability. Among other things, AI verification enables three major outcomes: prosperity, peace, and safety.

Prosperity in the age of AI might depend primarily on our ability to demonstrably build (and deploy) powerful and trustworthy AI systems that abide by agreed-upon rules. Individuals, companies, and countries are less likely to buy products that they cannot trust. An AI company might be much more successful if independent (and ongoing) scrutiny of its products confirms that it abides by the law, protects (or deletes) customer data, and robustly performs at a measured level of quality.

Peace remains possible as long as it looks preferable to war. Maintaining this balance can be the central challenge of statecraft, and it includes questions of deterrence, assurance, and arms control—all of which depend on the availability of credible information. For example, preventing war might require credible evidence that civilian AI resources are not being militarized, or that very specific kinds of AI—such as superintelligence—are not being built.

Safety is also desirable. AI appears to be powerful enough to do significant harm if it falls into the wrong hands, or if it is allowed to run amok. It’s important to protect against this. Corporations might also rightly fear that an AI disaster—the damage of which could be akin to Chernobyl or Three Mile Island—could put their future in jeopardy regardless of where the disaster took place or who caused it. Industry-wide safety and security rules can mitigate these risks, but ensuring a level playing field would require robust evidence of compliance—acquired through verification—so that no one can secretly violate the rules to gain competitive advantage.

So if we want to build a brighter future with AI while guarding against known problems, we must be able to build and deploy AI systems that are verifiable. We must be able to demonstrate what was done (or not done) in the creation and deployment of an AI system. Achieving this is challenging. The AI value chain includes several entire industries, including cutting-edge semiconductor fabrication, cloud computing, frontier AI developers, and everyone who uses AI. These industries are so large and variegated that verifying claims about them can be daunting to say the least. Worse still, most players in this value chain have economic and political reasons to severely restrict the availability of information about what they are doing. States, for example, fear that other states can exploit vulnerabilities in their industrial or military systems. Companies, in turn, fear that their competitors will gain access to the core insights undergirding their competitive advantage. Broadly speaking, competition among states and corporations places limits on their willingness to reveal information.

Remarkably, there may be a solution to this problem. Decades of work in cryptography and computer science have yielded “privacy-preserving” computational techniques, which allow computations to be done on private data without revealing that data. In a nutshell, actor A can run code X against their private data and show the result to actor B in a way that actor B can verify. That is, B can be confident that the correct code was run against the correct data and that the result is valid. Actor A, meanwhile, has protected their private data, since actor B sees only the results of the computation.
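
As a rough illustration of the idea above, the following toy sketch simulates actor A running agreed code on private data and handing actor B a report that B can check. It is a sketch under loud assumptions, not how any real system is built: `HARDWARE_KEY`, `AGREED_CODE`, `run_in_enclave`, and `verify_report` are hypothetical names, and an HMAC with a shared key stands in for the hardware-rooted asymmetric signatures that real confidential computing relies on. The sketch also checks only which code ran, not which data it ran on.

```python
import hashlib
import hmac
import json

# Assumption: a stand-in for a hardware-rooted attestation key. Real systems
# use asymmetric signatures so the verifier never holds a signing secret.
HARDWARE_KEY = b"toy-attestation-key"

# Code X, which both parties have inspected and agreed on (hypothetical).
AGREED_CODE = (
    "def audit(records):\n"
    "    return sum(1 for r in records if r['flagged'])\n"
)


def measure(code: str) -> str:
    """Hash of the code, playing the role of an enclave 'measurement'."""
    return hashlib.sha256(code.encode()).hexdigest()


def _tag(code_hash: str, result) -> str:
    """Authenticate the (code hash, result) pair with the toy hardware key."""
    payload = json.dumps({"code_hash": code_hash, "result": result}, sort_keys=True)
    return hmac.new(HARDWARE_KEY, payload.encode(), hashlib.sha256).hexdigest()


def run_in_enclave(code: str, private_data) -> dict:
    """Actor A's side: run the code on private data, emit only a tagged result."""
    namespace = {}
    exec(code, namespace)  # toy stand-in for loading code into an enclave
    result = namespace["audit"](private_data)
    code_hash = measure(code)
    return {"code_hash": code_hash, "result": result, "tag": _tag(code_hash, result)}


def verify_report(report: dict, expected_code: str) -> bool:
    """Actor B's side: check the tag and that the agreed code produced the result."""
    tag_ok = hmac.compare_digest(
        report["tag"], _tag(report["code_hash"], report["result"])
    )
    return tag_ok and report["code_hash"] == measure(expected_code)


if __name__ == "__main__":
    private_records = [{"flagged": True}, {"flagged": False}, {"flagged": True}]
    report = run_in_enclave(AGREED_CODE, private_records)
    print(report["result"], verify_report(report, AGREED_CODE))
```

Actor B receives only the count and the tag, never the records themselves. Changing either the code or the reported result causes `verify_report` to fail in this toy model.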

Why does this matter for verification? Because if the U.S. and China agree to an AI oversight treaty, they can verify each other’s compliance without having to spill their secrets. This is a great improvement over prior techniques, which tended to rely on human observation of sensitive data, such as inspectors examining nuclear weapons systems. While humans have a broad ability to perceive information around them and recall it later, computer code can be designed to not remember or transmit anything that is not explicitly required for the job at hand. Code can be inspected by all human parties down to the byte and proved to be correct for the purpose, but no such guarantees are possible with human inspectors.

Privacy-preserving verification is already possible for AI. Recently, the nonprofit OpenMined, the U.K. AI Security Institute (AISI), and the frontier AI company Anthropic ran a demonstration in which they showed how code could be mutually inspected and then run against private data from multiple organizations. Their exchange demonstrated how to achieve mutual privacy—where each party has data they do not want to reveal to the other. This experiment and others like it depend on a family of technologies that allow particular computational hardware to support secret computations. One example is confidential computing, which is an industry standard supported by many of the major players in AI hardware and is increasingly available through the newest generations of cutting-edge AI hardware, such as the Nvidia Hopper and Blackwell chips.

Confidential computing is probably enough to get us started with verification. It was designed and implemented by industry players for commercial purposes, but like many computational techniques, it has uses and ramifications that go far beyond its origin story. Thanks to the existence of technologies like confidential computing, it is already possible for AI companies and oversight bodies such as AI safety/security institutes to participate in a process that generates credible insights about AI systems. The approach piloted by OpenMined, U.K. AISI, and Anthropic could be applied at scale today.

There are a lot of advantages to building AI verification capabilities based on existing technologies such as confidential computing, including improvements in governance, corporate credibility, and user confidence. Regulators, for instance, could gain new kinds of credible insight into the creation and operation of cutting-edge AI models. For example, developers could credibly demonstrate that their training data does not contain dangerous knowledge or that their models will not lie to customers and regulators in their chain of thought.

Frontier AI developers could also make more credible claims about the quality and safety of their systems because they would be able to safely provide third-party auditors with more extensive access to their systems. Today, developers generally provide evaluators only with “black box” access to their models—the ability to ask questions and receive responses, but not inspect important details such as training data, algorithms, or model weights. With confidential computing, frontier developers could safely allow deeper access to their systems and thus enable much more detailed and credible scrutiny.

Users of AI—be they individuals, companies, or countries—could gain additional information about AI model quality and corporate practices. For example, customers could know precisely which model is interacting with them or gain more confidence that their data is not being copied against their will. Overall, the industry could be more credible in the eyes of consumers, and AI governance could define clear, verifiable, and enforceable rules.

While verification schemes centered on confidential computing are probably good enough for low- to medium-sensitivity AI work such as commercial models, one cannot assume that they are sufficient to protect extremely sensitive data such as state secrets (or the formula for Coca-Cola). The crux of the matter is that mechanisms such as confidential computing were not designed to ward off circumvention attempts by the great powers. If a competent cyber state such as the United States or China tried hard to break current implementations of confidential computing, it might be foolhardy to bet against them. This raises the question of what kind of verification scheme could actually be trusted with protecting some of our greatest secrets.

One potential solution is the idea of verifiable confidential computing: computing that protects an actor’s privacy while also giving them the ability to later prove what they did. Broadly speaking, if we’re able to build something like confidential computing that is drastically more trustworthy and secure, we might be able to not only solve AI verification but also greatly expand the potential for verification systems in general.

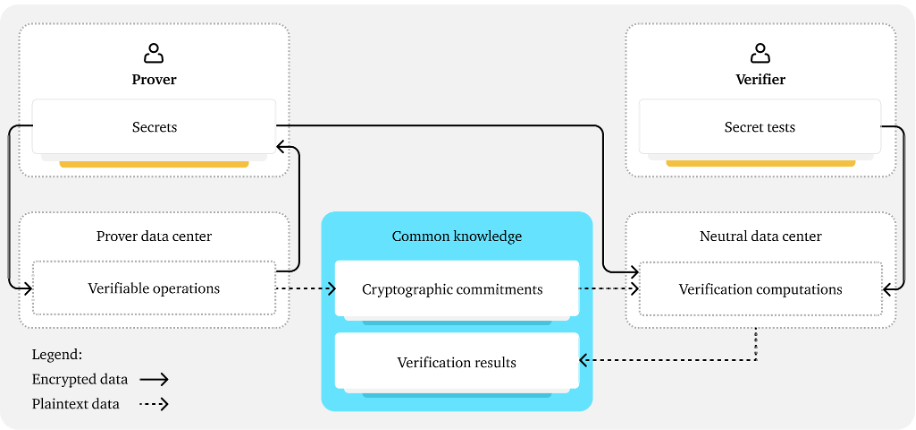

One potential way to achieve verifiable confidential computing is to combine the use of cryptographic commitments (a cryptographic technique that allows data to be securely identified without revealing any of the data) with a secure neutral data center (an extremely secure data center that is mutually overseen by both sides). As demonstrated in Figure 1, the Prover (who wants to privately run computations and then later demonstrate how their computations abide by rules) can run their computations on hardware that generates cryptographic commitments in a way that is credible for the Verifier (who wants to check that the Prover’s computations are following rules). Later, in a secure neutral data center, the cryptographic commitments can be used to reliably identify whether the Prover has provided the true data from their original computations. Within the neutral data center, verification computations can run in a privacy-preserving way and generate verification results that demonstrate whether the Prover’s computations and data adhere to the expected rules.

Figure 1. One approach for verifiable confidential computing. Source: Harack et al., “Verification for International AI Governance,” 2025.

This cryptographic dance allows the Prover to credibly demonstrate that they are following rules while also protecting the security of both sides. Obviously, the Prover wants to keep their computations secret. Less obvious is the fact that the Verifier might also need to keep parts of their tests secret. The Verifier’s test content might contain secrets that are important in their own right (for example, a test that examines a model’s insight into biological weapons may itself require such knowledge to operate). Furthermore, if the Prover knows the detailed content of the tests they are about to face, they can “teach to the test” and produce computations that pass the tests while defying their intent. In essence, the Prover would be empowered with the knowledge to adhere to the letter of the law while violating its spirit. Mutual privacy can address this concern.
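
The commit-then-verify flow described above can be sketched with a simple salted hash commitment. This is a minimal illustration under stated assumptions, not the scheme from the cited report: the function names, the JSON record format, and the compute cap in `compute_cap_rule` are all made up for the example, and a real deployment would generate commitments in trusted hardware rather than in ordinary software.

```python
import hashlib
import hmac
import json
import secrets


def commit(data: bytes) -> tuple[bytes, bytes]:
    """Prover side: return (commitment, nonce) for some private data."""
    # A random fixed-length nonce keeps the data from being guessed from the hash.
    nonce = secrets.token_bytes(32)
    return hashlib.sha256(nonce + data).digest(), nonce


def opens_to(commitment: bytes, nonce: bytes, data: bytes) -> bool:
    """Check that (nonce, data) is a valid opening of the commitment."""
    return hmac.compare_digest(commitment, hashlib.sha256(nonce + data).digest())


def neutral_check(commitment: bytes, nonce: bytes, data: bytes, rule) -> dict:
    """Runs inside the (hypothetical) neutral data center; only verdicts leave."""
    if not opens_to(commitment, nonce, data):
        return {"data_matches_commitment": False, "rule_satisfied": None}
    return {"data_matches_commitment": True, "rule_satisfied": bool(rule(data))}


def compute_cap_rule(data: bytes) -> bool:
    """Hypothetical Verifier test: declared training compute is under a made-up cap."""
    return json.loads(data)["training_flop"] <= 1e25


if __name__ == "__main__":
    record = json.dumps({"run_id": "demo", "training_flop": 3e24}).encode()
    commitment, nonce = commit(record)  # commitment is shared at computation time
    print(neutral_check(commitment, nonce, record, compute_cap_rule))
```

In this sketch the Verifier sees only the commitment up front and the two verdicts afterward. The Prover's record and the Verifier's rule meet only inside `neutral_check`, which mirrors the mutual privacy the text describes. If the Prover later submits a record that differs from the one committed to, the first verdict is `False` and the rule is never evaluated.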

If we can build a verification system that is capable of protecting our greatest secrets, the benefits for AI users, companies, and countries could be immense. Users could employ AI more confidently in broader and more sensitive domains because they would be able to rely on the findings and oversight provided by competent Verifiers. Companies could credibly demonstrate that their models are high quality and abide by strict rules. Moreover, companies could benefit from reduced compliance costs because a single verification stack could demonstrate their compliance with a host of different rules, including corporate standards, domestic law, and international agreements. AI-exporting states could provide fine-grained and scalable governance of AI that supports a vibrant AI ecosystem. AI-importing states could buy AI services that come with strong security guarantees (such as the service’s ability to prove that it cannot retain any data).

The great powers may even employ systems such as this for arms control so that they can maintain peace even through turbulent times. For example, a state could credibly demonstrate that its civilian computational resources are actually being used for civilian (not military) purposes, or that it is not building a category of AI that may have been deemed unacceptable to the international community, such as a superintelligence. The militarization of civilian resources is seen as one of the key dangers of proliferation of dual-use or general-purpose technologies—exemplified in efforts to peacefully manage industrial technology in Europe following World War II (such as the European Coal and Steel Community) and the governance of civilian nuclear technology via the International Atomic Energy Agency. Hypothetically, the prospect of superintelligence could present even starker dangers to the international community, such as a permanent loss of human control or decisive (and permanent) military advantages for the controlling state. The likelihood of these dangers is unknown, but AI verification presents an opportunity for humans to prepare for them.

The extraordinary pace at which AI capabilities are advancing requires that society move rapidly and purposefully toward creating a bright AI-enabled future. If we want the economic and political benefits of AI verification, serious efforts need to begin now. Our report outlines some first steps. An initial verification ecosystem can be fostered by AI users (including governments and corporations), the AI industry, and leading states, all using existing technology. A more complete and robust ecosystem will require a few years of research, engineering, and policymaking. Luckily, the considerable utility of AI verification should create strong incentives to make it happen. If all goes well, civilization will build AI verification for the same reason it builds other essential infrastructure: because it enables us to pursue and preserve our aspirations.