China’s Agentic AI Controversy

AI agents have sparked an urgent debate in China about data privacy and security that holds huge lessons for the U.S. and the future of AI everywhere.

A powerful new artificial intelligence (AI) agent called OpenClaw and Moltbook, a social networking site just for AI agents, have rocked the tech world with fear and excitement about what an AI future could look like. Just three months earlier, China gave us a glimpse of that future with its own controversy, which erupted over the first-ever smartphone with an AI agent embedded in its operating system. These developments have unleashed fierce debate in China and around the world about the security and privacy trade-offs that come with the expansive permissions agentic AI needs to succeed. The outcome of these debates in China will have ripple effects on AI everywhere.

The Doubao AI phone quickly became one of the hottest products in China’s fiercely competitive market. “I got my hands on one,” a friend in Beijing boasted late last year. He had bagged a limited-edition ByteDance ZTE AI smartphone, also called the Nubia M153, released to much fanfare in China in early December 2025. Nothing like it exists beyond China. It is upending the way users interact with their devices and revolutionizing how information flows among apps. “It has ushered in a profound transformation when it comes to control of traffic entry points, the boundaries of data security, and the future paradigm of human-computer interaction,” proclaimed a readout from a special forum held in Beijing days after the phone’s release.

Indeed, the AI phone has caused an uproar in China. Within days, many of China’s biggest apps blocked the Doubao phone, seeing it as a serious risk to data security. Because the agent is built into the phone’s operating system, it holds a kind of master key that gives it blanket access to the screen and to all app content, along with the ability to tap or click as if it were the user. Critics dubbed the agent a “burglar” with “god’s fingertips,” warning that it increased the risk of malicious input and intrusion attacks by criminal actors. For banks, it was impossible to distinguish actions taken by the agent from those taken by the user, creating myriad openings for fraud and hacking.

But defenders dismissed concerns as competitive kvetching in a battle for commercial dominance and control of traffic and data. The Doubao phone has “taken a bite out of someone else’s cake, and may even want to take the whole cake away,” pointed out Sang Jitao, a Beijing Jiaotong University professor, at the Beijing forum.

Other businesses also repelled the Doubao phone. Alibaba’s e-commerce site Taobao blocked it, as did its payments arm, Alipay. China’s most ubiquitous superapp, WeChat, followed suit, saying the phone triggered its high-risk security controls. Owned by Tencent, WeChat is essential to communicating with anyone in China, a kind of universe unto itself with its own bookings, payments, e-commerce, and more.

With the Doubao phone’s release, two of China’s most influential internet behemoths are at loggerheads. Will anybody purchase the phone if it means sacrificing services essential to existing in Chinese society? Will Tencent back down and give ByteDance all the user internet traffic and the data formerly walled off inside WeChat?

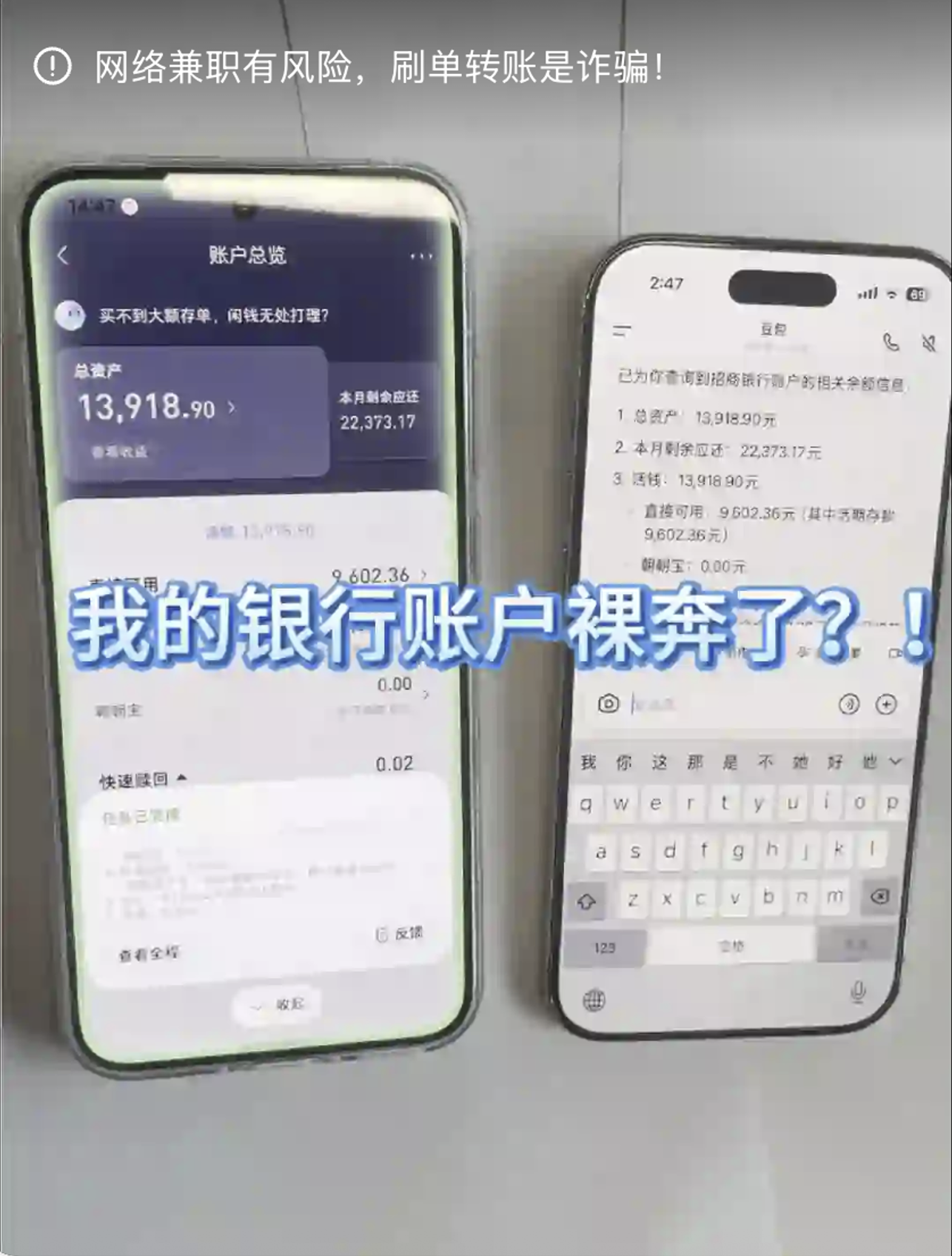

The Chinese public, too, is sounding the alarm. What would the Doubao phone mean for how their personal information is seen, where it is saved, and who has access to it? A viral video showed one user discovering that their bank balance was visible not just on the Doubao phone but also in a mirror of it on other devices where they were logged into Doubao AI. Similar videos rippled across the Chinese social media platform Little RedNote, showing other users experimenting with the Doubao phone and finding that their private financial data (card balances, outstanding bill balances, digital RMB wallets, digital wealth management accounts, and so on) was just as easily accessible. (The original video has been removed, but Little RedNote hosts a post with a screen recording of the full video; see image below.) Was the Doubao phone somehow sending personal data to the ByteDance cloud to train its AI? Where was the boundary between the data on the phone and the cloud, and who would determine access? And what of bystanders whose data was simply part of shared chats or files?

Little RedNote screen recording of a viral video (since removed) on Chinese social media showed one user finding that their bank balance was visible not just on the Doubao phone, but in a mirror of it on other devices where they were also logged into Doubao AI. “My bank account was leaked?!”

While the phone is coveted by Chinese consumers, it has sparked a flurry of discussion among China’s legal scholars and technologists about how regulators should address the unprecedented challenges agentic AI poses to data security and privacy. Moreover, it illustrates how risks to privacy can become tangled in other objectives, like consolidating commercial power and monetizing traffic and data.

No matter where one stands on the question of commercial challenge or data security risk, the controversy surfaces a much bigger debate in China and around the world: who owns the data generated on our devices, and who gets to decide where the guardrails belong? And the frenzy the Doubao phone unleashed speaks to the subject of my forthcoming book (Chicago, 2027): How can society balance the prizes and perils of open data when trust is in crisis?

Agentic AI could transform who and what has access to user data and internet traffic, fundamentally shifting the center of gravity away from apps. With the Doubao AI phone as a test case, Chinese technology experts are now grappling with vexing questions about interoperability and power within the nation’s tech ecosystem. Amid China’s fiercely competitive technology landscape, Chinese internet platforms, smartphone manufacturers, and state-owned telecommunications firms are battling to shape standards in agentic AI. The outcome will affect how users in China and beyond interact with Chinese AI.

This debate within China holds lessons, good and bad, for governing data inside the United States and for how AI may play out worldwide. At a moment when security experts in the U.S. are calling out the “lethal trifecta” of risks posed by OpenClaw, China’s experiment provides a lens into the trade-offs in data privacy and security that could accompany the shift to agentic AI. Chinese and Western experts alike are sounding the alarm over the “profound” issues it raises for security and privacy. Agentic AI is “threatening to break the blood-brain barrier between the application layer and the OS layer by conjoining all of these separate services [and] muddying their data,” Signal President Meredith Whittaker warned onstage at the SXSW conference last year.

When I visited Beijing just after the Doubao phone’s release, Chinese industry contacts told me that regulators were observing the phone in the wild before deciding on what form government intervention might take, and this may have been the goal: to create a test case first before regulating. Will the government intervene to mediate among its warring tech platforms as control over data and traffic is up for grabs? What new guardrails will be built in response to concerns voiced by citizens and scholars?

One thing is clear: The next chapter of the AI story isn’t just about chips or a single app. It is also about access to data, control of traffic, and permissions for agents to work seamlessly across fragmented landscapes of edge devices and services.

Agentic AI and Its Discontents

Agentic AI systems get tasks done with little human supervision. They operate proactively, across different environments, and take many steps autonomously before needing a human nudge. According to Tsinghua University Professor Chen Tianhao, AI agents directly modify the real-world environment rather than being passive tools. Like “a highly intelligent and efficient intern,” as Caiwei Chen put it in the MIT Technology Review, this kind of AI completes workflows that traditionally required human labor and thought. In China, an AI agent is sometimes called a proxy (

Such assistants might make travel reservations, place food orders, or sort out automatic payments and cancellations. They might engage with different websites or service providers. Or they could be part of a mobile phone’s operating system, saving users from having to open and navigate multiple applications. That would require agents to independently authenticate user identity and credentials to secure transactions—between the user and the relevant application, and between every application or service provider involved.

Agentic AI typically operates as a layer atop traditional large language models (LLMs) such as ChatGPT or DeepSeek. Unlike a chatbot responding to individual user prompts, AI agents are doers. They take a command, break it down into tasks, and then complete workflows. Asking an AI agent to “plan a weekend of good theater and cheap eats in Beijing” wouldn’t stop at an itinerary, or at a menu of options weighing the pros and cons of several itineraries, as a chatbot might provide; the agent would go on to act on the plan, for instance by booking the tickets and reserving the tables.

China and the United States are developing technical standards to enable AI agents to work across digital systems. These standards are emerging in ways that both converge and diverge. OpenClaw creator Peter Steinberger recommends running the agent on the models made by the Chinese AI company MiniMax. Many developers in China and the United States use the Model Context Protocol (MCP), introduced by American frontier AI company Anthropic in November 2024. This kind of “invisible infrastructure,” as Matt Steinberg and Prem M. Trivedi explain
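The cooperative, standards-based route that MCP represents can be sketched briefly. MCP messages use JSON-RPC 2.0, and an agent’s MCP client invokes a capability that a service has chosen to expose by sending a `tools/call` request that names the tool and passes its arguments. The sketch below is illustrative only: it assumes a hypothetical `book_table` tool and builds the message without any transport or server. What it shows is the model in which an app opts in to agent access by publishing tools, rather than an agent tapping its way through the app’s screens.

```python
import json
from itertools import count

# Request ids only need to be unique per connection; a counter is the simplest choice.
_request_ids = count(1)


def make_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP-style "tools/call" request as a JSON-RPC 2.0 message."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request, ensure_ascii=False)


# "book_table" is a hypothetical tool that a restaurant service might expose.
msg = make_tool_call("book_table", {"city": "Beijing", "party_size": 2, "time": "19:00"})
print(msg)
```

The service decides which tools exist and what arguments they accept, so the boundaries of agent access are set by the app rather than by whoever controls the operating system.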

Fragmentation Two Ways

One thing China and the United States have in common when it comes to AI agents is that the agents only succeed if they can operate seamlessly across different applications and connected devices. That kind of interoperability requires broad permissions so that AI agents can do things like access calendars, emails, chat logs, and credentials. But the broad permissions necessary for agentic AI’s development come at a cost to data privacy and security, and this is the crux of the controversy over OpenClaw in the Western world and the Doubao phone in China.

Some of these challenges in interoperability and access are unique to China. That is because the nation’s mobile ecosystem is more fragmented than any other. Different apps and programs don’t interoperate or share data. Users can spend all their time within the universe of one without touching another. This Balkanization takes two forms: superapp fragmentation and device fragmentation. Both are big problems for AI agents, which must call on services across multiple superapps and devices.

The first kind of fragmentation is a result of China’s “do everything” apps. These combine the core functions of Facebook, WhatsApp, Amazon, Uber, Google Maps, the App Store, and a bank app in one seamless interface. (Attempts to turn American apps like X into superapps have not gone so well.) The main superapps are WeChat and Alipay; increasingly, Meituan, Douyin, and Taobao offer bundled services too. Through Alipay, people in China can use a universe of services (each itself an ecosystem) for payments, social networking, ride hailing, and more. (For users outside of China, Alipay works more like a basic digital bank card.)

In many ways, a superapp is like an operating system. Just as developers build for Apple, Windows, or Android in the United States, developers build for WeChat or Alipay in China. And like Apple and Windows, WeChat and Alipay are walled gardens. Designed to lock in user traffic and interactions, they tend neither to share data nor to offer services that connect externally. Quite the opposite.

What this means for AI agents is that when they go to call on an app to complete a task—for example, accessing the contents of a text message in WeChat discussing plans to meet for dinner—the task will fail without the agent having the ability to read and act on the information held within the app’s walled garden. That is why it was such a big deal when WeChat blocked the Doubao phone through its risk controls.

The second kind of fragmentation, also unique to China in the obstacles it creates for AI agents, concerns devices. Elsewhere in the world, a layer of apps and services called Google Mobile Services (GMS) sits atop the Android operating system on Android phones. That is why phones built by Samsung, Google, or Motorola share Gmail, Chrome, Google Maps, and the Google Play Store (which, critically, allows app downloading and centralizes security reviews and contractual guarantees). The GMS layer also powers smartphone capabilities such as firmware updates and GPS tracking.

But China blocks Google. So Chinese smartphone manufacturers that produce Android phones have each developed their own equivalent to GMS that runs on Android’s open-source operating system. Chinese users switching from an Android phone made by one manufacturer to a phone made by a different firm must also switch app stores, cloud services, assistants, push notifications, and a range of other services. Developers, meanwhile, must adapt their software for each manufacturer’s proprietary layer if they wish to market apps in different countries. To add to the confusion, Huawei phones are manufactured and sold with a home-grown operating system, HarmonyOS, with its own services.

Both flavors of fragmentation are anathema to seamless interoperability and, hence, to agentic AI. Chinese agentic AI will only advance if it overcomes these barriers, which is precisely what the Doubao AI phone aims to do. It is no surprise, then, that its release sparked such a firestorm in China. Yet, in the long run, whatever company manages to overcome these barriers in China may be able to deliver more useful and powerful AI. The difficulties have prompted Chinese scholars, companies, and engineers to build new technologies and debate policies and standards, some unique to China, and some relevant around the world.

Regulating AI Agents

In this context, a battle is unfolding within China to shape the regulations and standards for agentic AI. Stakeholders include the internet platforms that own the superapps, the companies building AI agents, device makers, and state-owned telecommunications companies that double as cloud providers. The winners will craft the guardrails for data access, security authentication, and more.

Things are evolving quickly, sometimes in different directions. Just weeks before the Doubao phone storm, WeChat users in China were reporting that Tencent may have blocked Huawei’s AI agent, called Xiaoyi or Celia, from starting calls. At the same time, some superapps may be taking steps to give agents greater access. Alipay launched a “super portal” called Zhixiabao that allows AI agents to access functions like food delivery and financial services from within its mini-programs. Alipay promotes Zhixiabao to both Android and iOS users as an “AI Life Manager.” Other companies are more cautious, wary of losing data and traffic to the agents.

A separate power struggle over standards for agentic AI is between state-owned telecommunications companies (e.g., China Mobile, China Telecom) and private internet and cloud platforms (Alibaba Cloud, Tencent Cloud). In China, telecommunications firms also function as state-owned cloud providers—picture Amazon Web Services with its own cell towers. The kind of data each player has is different. Telecommunications companies hold location data, call patterns, and network behavior. Platforms harvest information on user preferences, social demographics, and financial transactions.

The contest for shaping coming regulations and standards remains open, with major consequences for users’ data security and privacy, control over user data, internet traffic, and commercial power in a rapidly evolving market.

Data Security and Privacy

There is no consensus in China about whether the battle to set the standards for agentic AI is more about commercial power or about genuine data security and privacy concerns. Regardless, the Doubao phone has forced a reckoning over whether its powerful capabilities require new government intervention to create guardrails on privacy and security in the era of AI. As Chen of Tsinghua University writes, AI agents fundamentally disrupt the existing mobile ecosystem, raising questions about how China’s existing laws and regulations need to “co-evolve” with developments in the technology. Until regulators determine a path forward, Chen argues, the AI agent embedded in the operating system itself has “amplified the privacy paradox in the AI era,” in which “users pursuing convenience unconsciously consolidate information originally scattered across various apps, into the hands of a single system-level intelligent agent.”

As China learns from the Doubao phone, governments, citizens, and industry elsewhere would be wise to take note as they grapple with solutions to this paradox in their own countries. Indeed, U.S. experts responding to what they observed in the first days of the OpenClaw agent (a general-purpose agent hosted locally on a Mac or in a virtual environment rather than on a phone) express concerns similar to those coming from China. “We’ve spent 20 years building security boundaries around our operating systems,” AI strategist Nate Jones said on his YouTube channel. “But agents require tearing that down by the nature of what an agent is—it needs to read your files, access your credentials …. [T]he value proposition requires punching holes in every boundary that security teams spent decades building. That is the bind. A useful agentic AI requires fairly broad permissions, and broad permissions create a massive attack surface.”

In China, these privacy and security fears are not new. Rather, they build on years of subtle public discourse and a proactive, centralized legal framework for individual data privacy, as well as on nearly a decade of rising demands for privacy among Chinese citizens and scholars.

Concerns about the Doubao phone thus mark the next stage in the evolution of China’s surprisingly comprehensive regime for regulating data. Citizens, scholars, and private-sector technologists fear that advances in AI further undercut data privacy and security protections. They call for regulators to craft new interventions to enshrine citizens’ control over their data as AI agents expand into new corners of daily life while disrupting the balance of power among tech giants.

Personal Information

Some Chinese scholars argue that changes in how AI agents collect and use data could require a fundamental reassessment of foundational concepts in China’s data protection legal framework. That framework was modeled after the EU’s General Data Protection Regulation (GDPR) and is sometimes called “GDPR with Chinese characteristics” to reflect China’s political system. The concepts at stake include consent, purpose limitation, and data minimization, all of which agentic AI could undermine by subverting users’ relationship with their personal information. Wang Yuan

Like experts in the European Union following the rollout of GDPR, Chinese scholars are increasingly questioning notice and consent as a viable answer to privacy concerns when it comes to AI. In response, Wang recommends creating a dynamic, participatory type of consent to reflect the “constantly changing nature of data used by AI agents,” but cautions that a welter of pop-up boxes can interfere with the user experience and become ineffective.

Moreover, the autonomous actions of agentic AI make it “impossible for information processors to determine the ultimate purpose of information collection and use,” Wang points out. He argues against simply patching existing privacy rules and calls for a new set of approaches to both law and technology. Alternatives include enabling users to customize settings for “dynamic” consent, privacy by design, automatic deletion of data after certain time periods, and stronger anonymization and deidentification. China has standards for anonymization and deidentification, but disagreement about which data can be reidentified will only intensify with AI advances.
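Two of Wang’s alternatives, purpose-bound “dynamic” consent and automatic deletion after a set period, can be made concrete with a small data store. This is a hypothetical sketch, not a design proposed by Wang or codified in Chinese standards: the `ConsentStore` class, the purpose labels, and the seven-day retention window are all assumptions chosen for illustration. Collection is refused unless the user has consented to that specific purpose, revoking consent deletes the associated data, and a purge removes anything older than the retention period.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class Record:
    value: str
    purpose: str          # the purpose the user consented to, e.g. "food_delivery"
    stored_at: datetime


@dataclass
class ConsentStore:
    """Hypothetical purpose-bound store with revocable consent and time-limited retention."""
    retention: timedelta
    consents: set[str] = field(default_factory=set)
    records: list[Record] = field(default_factory=list)

    def grant(self, purpose: str) -> None:
        self.consents.add(purpose)

    def revoke(self, purpose: str) -> None:
        # Withdrawing consent also deletes data already collected for that purpose.
        self.consents.discard(purpose)
        self.records = [r for r in self.records if r.purpose != purpose]

    def store(self, value: str, purpose: str, now: datetime) -> bool:
        if purpose not in self.consents:
            return False   # no consent for this purpose, so nothing is collected
        self.records.append(Record(value, purpose, now))
        return True

    def purge_expired(self, now: datetime) -> int:
        # Automatic deletion: keep only records younger than the retention window.
        before = len(self.records)
        self.records = [r for r in self.records if now - r.stored_at < self.retention]
        return before - len(self.records)


t0 = datetime(2026, 1, 1, tzinfo=timezone.utc)
store = ConsentStore(retention=timedelta(days=7))
store.grant("food_delivery")
print(store.store("home address", "food_delivery", t0))   # True
print(store.store("chat log", "ad_targeting", t0))        # False: never consented
print(store.purge_expired(t0 + timedelta(days=8)))        # 1 record deleted
```

Tying every record to a purpose is what lets revocation and deletion work; it also shows Wang’s difficulty, since an autonomous agent may not know the purpose of a piece of data at the moment it collects it.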

Hacking Accessibility

For AI agents to work on smartphones, apps need to let them in. Many developers are hesitant to provide this kind of access because it can reduce data, traffic, and advertising revenue. To get around this issue, AI agents can exploit accessibility services, which were originally designed to help people with disabilities use their phones, for instance hands-free.

A study by the Nanfang Compliance Technology Research Institute found that smartphone AI agents used accessibility permissions to read all on-screen content, including private information, and to perform operations without notifying users. Assistants could see bank card passwords and chat logs, and then click, long-press, and swipe the screen. Researchers evaluated six smartphones running AI agents and interviewed smartphone manufacturers, engineers, and legal scholars in China. (The think tank is affiliated with a media conglomerate in China called the Southern Finance Omnimedia Corp.) Some of the smartphones turned off the accessibility features after completing a task. Others enabled the feature to hail a ride with the Didi app and then left it on.

Chinese experts are weighing risks to security and privacy against how essential accessibility services have been to advancing technology. For example, Zhu Yue at Tongji University Law School points out that LLMs have benefited from accessing data from large volumes of videos, images, and text annotations provided by accessibility services. He writes that these issues around accessibility are an overlooked area of AI law: “Simply by crawling multimedia content and their corresponding descriptions, AI enjoys a free lunch.” How best to make use of these features while protecting sensitive user data and giving users more control over it remains an open question in China.

Privacy experts interviewed for the Nanfang study point to two ways in which users have some degree of control, at least in theory. There should be notice and consent prompts before accessibility features are activated, and permission switches that allow users to monitor and control that access. However, the study found that across the smartphones it reviewed, the “situation is rather chaotic.”
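The difference between phones that switched accessibility off after a task and phones that left it on can be expressed as a scoping rule. The sketch below is a hypothetical model, not Android’s accessibility API: `AccessibilitySwitch`, `scoped_accessibility`, and the `ask_user` callback are invented names. It combines the two controls the experts describe, a consent prompt before the permission is enabled and a user-visible switch with an audit log, and it guarantees the switch is turned back off when the task ends, even if the task fails.

```python
from contextlib import contextmanager


class AccessibilitySwitch:
    """Hypothetical user-visible permission switch; not a real Android API."""

    def __init__(self) -> None:
        self.enabled = False
        self.log: list[str] = []   # audit trail the user could inspect


@contextmanager
def scoped_accessibility(switch: AccessibilitySwitch, task: str, ask_user):
    # Notice and consent: ask before the permission is switched on.
    if not ask_user(f"Allow the assistant to read and tap the screen for: {task}?"):
        raise PermissionError(f"user declined accessibility access for {task!r}")
    switch.enabled = True
    switch.log.append(f"enabled for {task}")
    try:
        yield switch
    finally:
        # Always switch the permission back off, even if the task raises.
        switch.enabled = False
        switch.log.append(f"disabled after {task}")


switch = AccessibilitySwitch()
with scoped_accessibility(switch, "hail a ride", ask_user=lambda prompt: True):
    assert switch.enabled          # access exists only inside the task
print(switch.enabled)              # False
print(switch.log)                  # ['enabled for hail a ride', 'disabled after hail a ride']
```

Tying the permission’s lifetime to the task, rather than to a setting the user must remember to reset, rules out the “left it enabled” behavior the Nanfang study observed.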

Access All Areas

The Doubao AI phone has entered uncharted territory, out ahead of any existing legal or standards regime in China or globally. It is also unique in its level of access. While Chinese media have dubbed the Samsung Galaxy S26 series the overseas version of the Doubao phone, the two differ in the kinds of system permissions they hold.

In the Doubao phone, the AI agent comes fused into the operating system (OS). It relies on an elevated, system-level OS permission called INJECT_EVENTS to read and interpret the screen and to click buttons in ways that are indistinguishable from a human user. (The Samsung Galaxy uses a hybrid approach: it mainly relies on API access granted by the top 200 apps in the app store, but is developing a fallback framework that reads the screen and clicks in a way that mimics a human.)

The Doubao phone’s system-level symbiosis is only possible through ByteDance’s partnership with the device manufacturer ZTE. ByteDance is now exploring partnerships with Lenovo, Vivo, and other device makers. It is INJECT_EVENTS that enables the Doubao agent to read screens, navigate the user interface, and click without having been granted access to an app’s application programming interface (API). “It does not ask apps for cooperation. It simply moves through their interfaces as if it were the one holding the device,” writes Boyuan Wang on his Substack.

There is no rule preventing more phone manufacturers from granting signature-level permissions like INJECT_EVENTS to AI. ByteDance has stated that it only uses the permission with explicit user consent. All of which raises the question: What kind of personal information (screenshots, texts, and so on) does the phone “see and remember” after completing tasks? What remains on the device, and what is transmitted to ByteDance for reasoning? One industry expert explained in the Southern Metropolis Daily that data is sent to the cloud and processed by the model for inference, but not stored, and that data from new tasks overwrites previous content. Users must enable the phone’s global memory function (it is off by default) for the Doubao agent to remember their preferences. For example, when the function is enabled, the agent will remember that the user likes their coffee iced without sugar, and this preference is stored on the device. When a task involves planning and reasoning, screen images from each step are uploaded to the cloud for processing, but they are neither stored on the server nor used for model training.
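The data handling the industry expert describes can be restated as a small model that distinguishes two kinds of memory. This is a hypothetical sketch of that description, not ByteDance’s implementation: `AgentMemory` and its methods are invented names, and the cloud upload step is deliberately not modeled. Per-task context is replaced whenever a new task starts, while long-term preferences are written to a device-side store only after the user opts in to global memory.

```python
class AgentMemory:
    """Hypothetical model of the described data flow; not ByteDance's code."""

    def __init__(self) -> None:
        self.global_memory_enabled = False       # off by default
        self.preferences: dict[str, str] = {}    # device-side store, written only if enabled
        self.task_context: list[str] = []        # scratch data for the current task only

    def start_task(self) -> None:
        # A new task overwrites whatever the previous task left behind.
        self.task_context = []

    def observe(self, screen_step: str) -> None:
        # Records a reference to one step's screen for the current task.
        self.task_context.append(screen_step)

    def remember(self, key: str, value: str) -> bool:
        if not self.global_memory_enabled:
            return False   # without opt-in, preferences are not retained
        self.preferences[key] = value
        return True


mem = AgentMemory()
mem.start_task()
mem.observe("step-1 screenshot")
print(mem.remember("coffee", "iced, no sugar"))   # False: global memory is off
mem.global_memory_enabled = True
print(mem.remember("coffee", "iced, no sugar"))   # True
mem.start_task()
print(mem.task_context, mem.preferences)          # [] {'coffee': 'iced, no sugar'}
```

Writing the flow down this way makes the open questions easier to pin down: what exactly goes into the per-task context, and whether anything in it outlives the task once it leaves the device.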

Seeking a Path Forward

In the wake of the Doubao phone storm, early recommendations for new guardrails are already circulating as regulators take stock of the “contest for empire” among platforms, as well as the concerns citizens are raising on platforms like Little RedNote. None of this is resolved, but the outcome will have sweeping implications for the distribution of commercial power and for what consumers of Chinese AI, in China and beyond, will experience.

When it comes to sensitive credentials and transactions, such as AI agents accessing payments and fund transfers, Chen of Tsinghua recommends creating standards (possibly mandatory regulations that go beyond industry guidelines) under which Doubao would detect a higher-risk action and automatically suspend the AI takeover, returning control to the user. Meanwhile, a financial technology industry alliance in China has already responded to the controversy by publishing recommendations for financial applications of agentic AI, with preliminary guidelines on data processing and case studies of how financial institutions are building and integrating AI agents.
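Chen’s recommendation amounts to a checkpoint in the agent’s execution loop. The sketch below is a minimal illustration under stated assumptions: the `HIGH_RISK_ACTIONS` categories, the `Action` type, and `run_agent` are hypothetical, since no such standard has yet defined which actions count as higher risk. The agent works through its plan and, on reaching a high-risk step, stops and hands that step back to the user instead of performing it.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical risk categories; an actual standard would define these.
HIGH_RISK_ACTIONS = {"payment", "fund_transfer", "enter_password"}


@dataclass
class Action:
    kind: str
    detail: str


def run_agent(plan: list[Action]) -> tuple[list[str], Action | None]:
    """Execute actions until a high-risk one appears, then hand control back.

    Returns the completed steps and the action awaiting the user (or None).
    """
    done: list[str] = []
    for action in plan:
        if action.kind in HIGH_RISK_ACTIONS:
            # Suspend the takeover: the user must perform or confirm this step.
            return done, action
        done.append(f"{action.kind}: {action.detail}")
    return done, None


plan = [
    Action("open_app", "food delivery"),
    Action("select_item", "iced coffee, no sugar"),
    Action("payment", "23.00 RMB"),
    Action("notify", "order placed"),
]
done, pending = run_agent(plan)
print(done)     # ['open_app: food delivery', 'select_item: iced coffee, no sugar']
print(pending)  # Action(kind='payment', detail='23.00 RMB')
```

The steps after the payment never run automatically. Once the user completes the payment, control would need to pass back to the agent explicitly, which also gives banks a clear point at which the human, not the agent, is acting.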

Another recommendation is to require local, on-device processing of particularly sensitive information, such as the contents of chat logs and photo albums, rather than sending it to the cloud. Currently it is not clear what data remains on the device and when it is sent to the cloud, as the viral video showing bank account information revealed. Specific scenarios could be graded according to risk, perhaps similar to the way China’s regulations for cross-border data transfer already assign different risk levels to different types of data when deciding whether to require local storage or allow transfer overseas.
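A risk-graded approach could be expressed as a routing table that decides where each kind of data may be processed. In this sketch the categories, the three risk levels, and the routing outcomes are all hypothetical placeholders; they are not taken from China’s cross-border transfer rules or from any published proposal. Low-risk data may go to the cloud, sensitive data stays on the device, and the most sensitive data also requires user confirmation. Data of unknown type defaults to cautious on-device handling.

```python
from enum import Enum


class Risk(Enum):
    LOW = 1
    HIGH = 2
    CRITICAL = 3


# Hypothetical grading; the categories and levels are illustrative only.
DATA_RISK = {
    "weather_query": Risk.LOW,
    "restaurant_menu": Risk.LOW,
    "chat_log": Risk.HIGH,
    "photo_album": Risk.HIGH,
    "bank_balance": Risk.CRITICAL,
}


def route(category: str) -> str:
    """Decide where an agent may process a given category of data."""
    risk = DATA_RISK.get(category, Risk.HIGH)   # unknown data is treated cautiously
    if risk is Risk.LOW:
        return "cloud"
    if risk is Risk.HIGH:
        return "device"
    return "device_with_user_confirmation"


for category in ["restaurant_menu", "chat_log", "bank_balance", "unlabeled_file"]:
    print(category, "->", route(category))
```

Defaulting unclassified data to the device is a deliberate design choice here: it addresses the uncertainty the viral video exposed, since users would no longer have to guess whether an unfamiliar data type leaves the phone.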

Others propose viewing agentic AI through an antitrust lens. China’s Anti-Monopoly Law prohibits certain kinds of data acquisition (“traffic hijacking”), and AI agents could be regulated as digital gatekeepers. Under such an approach, for example, Doubao AI might be barred from directing users to Douyin’s e-commerce service, which is also owned by ByteDance.

Here, Unevenly Distributed

AI agents strain existing laws and safeguards by connecting multiple services and automatically sharing data, making it seemingly impossible to determine who decides how data is used and who controls it. Data privacy and security concerns in China are similar to those being raised by U.S. civil society organizations. “Moving from a chatbot responding to prompts to an AI agent acting on our behalf would require a major leap in data access and memory standards,” write New America’s Steinberg and Trivedi. Should regulation require such agents to receive authorization from third-party apps based on a set of criteria negotiated between companies? Or, since the data belongs to the owner of the device on which the agent resides, who has already tasked the agent with acting on their behalf, should no additional permission be required? By this logic, the user’s intent already constitutes authorization.

Until late last year, such recondite questions about interoperability and control were largely academic. Now that there are real-world test cases, the debate has taken on a febrile urgency, not least because ByteDance may target overseas markets next for its AI phones. Industry experts believe that once the technology is better understood, China will issue new standards and rules for it. The implications will be far-reaching.